
Artificial neural network

From Wikipedia, the free encyclopedia


"Neural network" redirects here. For networks of living neurons, see Biological neural network. For the journal, see Neural Networks (journal). For the evolutionary concept, see Neutral network (evolution).

"Neural computation" redirects here. For the journal, see Neural Computation (journal).

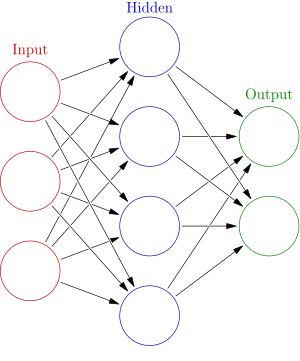

An artificial neural network is an interconnected group of nodes, akin to the vast network of neurons in a brain. Here, each circular node represents an artificial neuron and an arrow represents a connection from the output of one neuron to the input of another.

Artificial neural networks (ANNs) or connectionist systems are computing systems inspired by the biological neural networks that constitute animal brains. Such systems learn (progressively improve performance) to do tasks by considering examples, generally without task-specific programming. For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat" and using the analytic results to identify cats in other images. They have found most use in applications difficult to express in a traditional computer algorithm using rule-based programming.

An ANN is based on a collection of connected units called artificial neurons (analogous to neurons in a biological brain). Each connection (analogous to a synapse) between neurons can transmit a signal to another neuron. The receiving (postsynaptic) neuron can process the signal(s) and then signal downstream neurons connected to it. Neurons may have state, generally represented by real numbers, typically between 0 and 1. Neurons and synapses may also have a weight that varies as learning proceeds, which can increase or decrease the strength of the signal sent downstream. Further, they may have a threshold such that the downstream signal is sent only if the aggregate signal is below (or above) that level.
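The weighted-sum-and-threshold behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `neuron_output`, the example weights, and the choice to fire when the aggregate is at or above the threshold are all assumptions made for the example.

```python
def neuron_output(inputs, weights, threshold):
    """Return 1.0 if the weighted sum of the inputs reaches the threshold, else 0.0."""
    # Each incoming signal is scaled by the weight of its connection.
    aggregate = sum(x * w for x, w in zip(inputs, weights))
    # Assumed convention: the neuron fires when the aggregate is at or above the threshold.
    return 1.0 if aggregate >= threshold else 0.0


# Hypothetical signals and weights chosen only for illustration.
signals = [1.0, 0.0, 1.0]
weights = [0.5, 0.9, 0.3]

fired = neuron_output(signals, weights, threshold=0.7)
silent = neuron_output(signals, weights, threshold=0.9)
```

Here the aggregate signal is 0.8, so the neuron fires with a threshold of 0.7 and stays silent with a threshold of 0.9. Learning, in this picture, amounts to adjusting the entries of `weights`.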

Typically, neurons are organized in layers. Different layers may perform different kinds of transformations on their inputs. Signals travel from the first (input) layer to the last (output) layer, possibly after traversing the layers multiple times.

The original goal of the neural network approach was to solve problems in the same way that a human brain would. Over time, attention focused on matching specific mental abilities, leading to deviations from biology such as backpropagation, or passing information in the reverse direction and adjusting the network to reflect that information.

Neural networks have been used on a variety of tasks, including computer vision, speech recognition, machine translation, social network filtering, playing board and video games, and medical diagnosis.

As of 2017, neural networks typically have a few thousand to a few million units and millions of connections. Their computing power is similar to that of a worm brain, [citation needed] several orders of magnitude simpler than a human brain. Despite this, they can perform functions (e.g., playing chess) that are far beyond a worm's capacity.


History

Warren McCulloch and Walter Pitts [1] (1943) created a computational model for neural networks based on mathematics and algorithms called threshold logic. This model paved the way for neural network research to split into two approaches. One approach focused on biological processes in the brain while the other focused on the application of neural networks to artificial intelligence. This work led to work on nerve networks and their link to finite automata. [2]

Hebbian learning

In the late 1940s, D.O. Hebb [3] created a learning hypothesis based on the mechanism of neural plasticity that is now known as Hebbian learning. Hebbian learning is an unsupervised learning rule. This evolved into models for long term potentiation. Researchers started applying these ideas to computational models in 1948 with Turing's B-type machines.

Farley and Clark [4] (1954) first used computational machines, then called "calculators", to simulate a Hebbian network. Other neural network computational machines were created by Rochester, Holland, Haibt and Duda [5] (1956).

Rosenblatt [6] (1958) created the perceptron, an algorithm for pattern recognition. With mathematical notation, Rosenblatt described circuitry not in the basic perceptron, such as the exclusive-or circuit that could not be processed by neural networks at the time. [7]

In 1959, a biological model proposed by Nobel laureates Hubel and Wiesel was based on their discovery of two types of cells in the primary visual cortex: simple cells and complex cells. [8]

The first functional networks with many layers were published by Ivakhnenko and Lapa in 1965, becoming the Group Method of Data Handling. [9] [10] [11]

Neural network research stagnated after machine learning research by Minsky and Papert (1969), [12] who discovered two key issues with the computational machines that processed neural networks. The first was that basic perceptrons were incapable of processing the exclusive-or circuit. The second was that computers did not have enough processing power to effectively handle the work required by large neural networks. Neural network research slowed until computers achieved far greater processing power.
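The exclusive-or limitation can be illustrated with a short Python sketch. It assumes a simple threshold unit (hypothetical helper `threshold_unit`) and searches a small, arbitrarily chosen grid of weights and biases for a single unit that reproduces exclusive-or; it then contrasts this with a hand-wired two-layer arrangement. The grid search is only an illustration, not a proof, although the underlying fact (no single linear threshold unit computes exclusive-or) holds for all weights.

```python
from itertools import product

# Truth table for exclusive-or: (inputs, expected output).
XOR_CASES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def threshold_unit(inputs, weights, bias):
    """Fire (return 1) when the weighted sum plus bias is positive."""
    return 1 if sum(x * w for x, w in zip(inputs, weights)) + bias > 0 else 0


def single_unit_solves_xor(weights, bias):
    """Check whether one threshold unit matches every row of the XOR table."""
    return all(threshold_unit(x, weights, bias) == y for x, y in XOR_CASES)


def two_layer_xor(inputs):
    """Hand-wired two-layer network: output fires for 'OR but not AND'."""
    either = threshold_unit(inputs, (1, 1), -0.5)  # behaves like OR
    both = threshold_unit(inputs, (1, 1), -1.5)    # behaves like AND
    return threshold_unit((either, both), (1, -1), -0.5)


# Illustrative grid: weights and bias from -4.0 to 4.0 in steps of 0.5.
grid = [v / 2 for v in range(-8, 9)]
single_unit_solutions = [
    (w1, w2, b)
    for w1, w2, b in product(grid, repeat=3)
    if single_unit_solves_xor((w1, w2), b)
]
two_layer_outputs = [two_layer_xor(x) for x, _ in XOR_CASES]
```

The search finds no single-unit solution on this grid, while the two-layer arrangement reproduces the full truth table. The weights in `two_layer_xor` are set by hand here; finding such weights automatically is what training multi-layer networks requires.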

Backpropagation

Artificial intelligence shifted in the late 1980s from high-level (symbolic), characterized by expert systems with knowledge embodied in if-then rules, to low-level (sub-symbolic) machine learning, characterized by knowledge embodied in the parameters of a cognitive model.

A key advance was Werbos' (1975) backpropagation algorithm that effectively solved the exclusive-or problem, and more generally accelerated the training of multi-layer networks. [7] [further explanation needed]
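As a rough illustration of what backpropagation computes, the following Python sketch propagates an error backward through a tiny network with one hidden layer and compares one resulting gradient with a finite-difference estimate. Everything specific here is an assumption for the example: the sigmoid activation, the squared-error loss, the absence of bias terms, the weight values, and the function names.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, w_hidden, w_out):
    """Forward pass: input -> sigmoid hidden layer -> single sigmoid output."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)))
    return hidden, output


def loss(x, target, w_hidden, w_out):
    _, output = forward(x, w_hidden, w_out)
    return 0.5 * (output - target) ** 2


def backprop(x, target, w_hidden, w_out):
    """Return gradients of the loss with respect to hidden and output weights."""
    hidden, output = forward(x, w_hidden, w_out)
    # Error signal at the output, scaled by the sigmoid's slope there.
    delta_out = (output - target) * output * (1.0 - output)
    grad_out = [delta_out * h for h in hidden]
    # The output error is passed backward through the output weights.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w_out, hidden)]
    grad_hidden = [[d * xi for xi in x] for d in delta_hidden]
    return grad_hidden, grad_out


def numeric_gradient(x, target, w_hidden, w_out, row, col, eps=1e-6):
    """Central-difference estimate for one hidden weight, used as a check."""
    original = w_hidden[row][col]
    w_hidden[row][col] = original + eps
    loss_plus = loss(x, target, w_hidden, w_out)
    w_hidden[row][col] = original - eps
    loss_minus = loss(x, target, w_hidden, w_out)
    w_hidden[row][col] = original
    return (loss_plus - loss_minus) / (2 * eps)


# Hypothetical example values.
x, target = [1.0, 0.0], 1.0
w_hidden = [[0.5, -0.3], [0.8, 0.2]]
w_out = [0.7, -0.4]

grad_hidden, grad_out = backprop(x, target, w_hidden, w_out)
estimate = numeric_gradient(x, target, w_hidden, w_out, row=0, col=0)
```

The analytic value `grad_hidden[0][0]` and the numerical `estimate` should agree closely. Training then consists of repeatedly nudging each weight against its gradient.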

In the mid-1980s, parallel distributed processing became popular under the name connectionism. Rumelhart and McClelland (1986) described the use of connectionism to simulate neural processes. [13]

Support vector machines and other, much simpler methods such as linear classifiers gradually overtook neural networks in machine learning popularity.

Earlier challenges in training deep neural networks were successfully addressed with methods such as unsupervised pre-training, while available computing power increased through the use of GPUs and distributed computing. Neural networks were deployed on a large scale, particularly in image and visual recognition problems. This became known as "deep learning", although deep learning is not strictly synonymous with deep neural networks.

In 1992, max-pooling was introduced to help with least shift invariance and tolerance to deformation to aid in 3D object recognition. [14] [15] [16]
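A minimal Python sketch can show how max-pooling gives some tolerance to small shifts. It assumes non-overlapping 2x2 windows and an image stored as a list of rows with even dimensions; the function name `max_pool_2x2` and the toy images are made up for the illustration.

```python
def max_pool_2x2(image):
    """Keep the largest value in each non-overlapping 2x2 window."""
    rows, cols = len(image), len(image[0])
    return [
        [
            max(image[r][c], image[r][c + 1], image[r + 1][c], image[r + 1][c + 1])
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]


# Two toy 4x4 images: the same bright feature, shifted by one pixel diagonally.
image_a = [
    [9, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
image_b = [
    [0, 0, 0, 0],
    [0, 9, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

pooled_a = max_pool_2x2(image_a)
pooled_b = max_pool_2x2(image_b)
```

Both pooled results are identical because the feature stays inside the same 2x2 window. A shift that crosses a window boundary would change the output, so the tolerance is only partial.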

The vanishing gradient problem affects many-layered feedforward networks that use backpropagation and also recurrent neural networks. [17] [18] As errors propagate from layer to layer, they shrink exponentially with the number of layers, impeding the tuning of neuron weights that is based on those errors, particularly affecting deep networks.
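The exponential shrinkage can be made concrete with a back-of-the-envelope calculation in Python. The sketch assumes that each layer multiplies the backpropagated error by its weight times the slope of its activation, that all weights equal 1, and that the slope is 0.25 (the largest slope of the logistic sigmoid). Real networks vary from layer to layer, so this is only an order-of-magnitude picture.

```python
def gradient_scale(num_layers, weight=1.0, activation_slope=0.25):
    """Factor by which an error signal is scaled after passing back through the layers."""
    # Each layer contributes one multiplicative factor of weight * slope.
    return (weight * activation_slope) ** num_layers


# Scale of the error signal reaching the first layer for increasing depth.
scales = {depth: gradient_scale(depth) for depth in (2, 5, 10, 20)}
```

Under these assumptions the signal is already below one millionth of its original size after ten layers, which leaves the earliest layers with almost no information to learn from.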

To overcome this problem, Schmidhuber's multi-level hierarchy of networks (1992) was pre-trained one level at a time by unsupervised learning and then fine-tuned by backpropagation. [19] Behnke (2003) relied only on the sign of the gradient (Rprop) [20] on problems such as image reconstruction and face localization.
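The idea of using only the sign of the gradient can be sketched as follows. This is a simplified, single-weight update in the spirit of Rprop, not the method used in the cited work: the growth and shrink factors (1.2 and 0.5), the step-size limits, the function names, and the quadratic test problem are all assumptions for the example.

```python
def sign(value):
    return (value > 0) - (value < 0)


def rprop_minimize(gradient, start, iterations=200, step=0.1,
                   grow=1.2, shrink=0.5, step_min=1e-6, step_max=50.0):
    """Minimize a one-dimensional function using only the sign of its gradient."""
    weight, previous_gradient = start, 0.0
    for _ in range(iterations):
        current_gradient = gradient(weight)
        agreement = current_gradient * previous_gradient
        if agreement > 0:
            # Same direction as last time: take a bolder step.
            step = min(step * grow, step_max)
        elif agreement < 0:
            # Direction flipped, so the minimum was overshot: be more careful.
            step = max(step * shrink, step_min)
        # Only the sign of the gradient decides the direction of the move.
        weight -= sign(current_gradient) * step
        previous_gradient = current_gradient
    return weight


# Gradient of (w - 3)^2, and the same gradient scaled up a thousandfold.
found = rprop_minimize(lambda w: 2.0 * (w - 3.0), start=0.0)
found_scaled = rprop_minimize(lambda w: 2000.0 * (w - 3.0), start=0.0)
```

Both runs follow exactly the same path, because the magnitude of the gradient never enters the update. That insensitivity to magnitude is what makes sign-based updates attractive when gradients become very small in deep networks.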

Hinton et al. (2006) proposed learning the distribution of a high-level representation using successive layers of binary or real-valued latent variables, with a restricted Boltzmann machine [21] to model each layer. Once sufficiently many layers have been learned, the deep architecture may be used as a generative model by reproducing the data when sampling down the model (an "ancestral pass") from the top level feature activations. [22] [23] In 2012, Ng and Dean created a neural network that learned to recognize higher-level concepts, such as cats, only from watching unlabeled images taken from YouTube videos. [24]

Hardware-based designs

Computational devices were created in CMOS, for both biophysical simulation and neuromorphic computing. Nanodevices [25] for very large scale principal components analyses and convolution may create a new class of neural computing because they are fundamentally analog rather than digital (even though the first implementations may use digital devices). [26]

Ciresan and colleagues (2010) [27] in Schmidhuber's group showed that despite the vanishing gradient problem, GPUs make back-propagation feasible for many-layered feedforward neural networks.

Contests

Between 2009 and 2012, recurrent neural networks and deep feedforward neural networks developed in Schmidhuber's research group won eight international competitions in pattern recognition and machine learning. [28] [29] For example, the bi-directional and multi-dimensional long short-term memory (LSTM) [30] [31] [32] [33] of Graves et al. won three competitions in connected handwriting recognition at the 2009 International Conference on Document Analysis and Recognition (ICDAR), without any prior knowledge about the three languages to be learned. [34] [35]

Ciresan and colleagues won pattern recognition contests, including the IJCNN 2011 Traffic Sign Recognition Competition, [36] the ISBI 2012 Segmentation of Neuronal Structures in Electron Microscopy Stacks challenge [37] and others. Their neural networks were the first pattern recognizers to achieve human-competitive or even superhuman performance [38] on benchmarks such as traffic sign recognition (IJCNN 2011) or the MNIST handwritten digits problem.

Researchers demonstrated (2010) that deep neural networks interfaced with a hidden Markov model with context-dependent states that define the neural network output layer can drastically reduce errors in large-vocabulary speech recognition tasks such as voice search.

GPU-based implementations [39] of this approach won many pattern recognition contests, including the IJCNN 2011 Traffic Sign Recognition Competition, [40] the ISBI 2012 Segmentation of neuronal structures in EM stacks challenge, [41] the ImageNet Competition [42] and others.

Deep, highly nonlinear neural architectures similar to the neocognitron [43] and the "standard architecture of vision", [44] inspired by simple and complex cells, were pre-trained by unsupervised methods [45] [46] by Hinton. [45] [47] A team from his lab won a 2012 contest sponsored by Merck to design software to help find molecules that might lead to new drugs. [48]

Convolutional networks

As of 2011, the state of the art in deep learning feedforward networks alternated convolutional layers and max-pooling layers, [39] [49] topped by several fully or sparsely connected layers followed by a final classification layer. Learning is usually done without unsupervised pre-training.
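To give a feel for how alternating convolutional and max-pooling layers reshape an image, the following Python sketch traces the side length of a square feature map through such a stack. It assumes convolutions without padding and with stride 1, non-overlapping pooling windows, and a 28-pixel input such as an MNIST digit; the layer sizes and the function name are illustrative choices, not taken from any particular published network.

```python
def trace_feature_map_sizes(input_size, layers):
    """Return the side length of a square feature map after each layer."""
    sizes = [input_size]
    for kind, window in layers:
        if kind == "conv":
            # Unpadded, stride-1 convolution trims (window - 1) pixels per side length.
            sizes.append(sizes[-1] - window + 1)
        elif kind == "pool":
            # Non-overlapping pooling divides the side length by the window size.
            sizes.append(sizes[-1] // window)
        else:
            raise ValueError(f"unknown layer kind: {kind}")
    return sizes


# Hypothetical stack: two rounds of 5x5 convolution followed by 2x2 max-pooling.
stack = [("conv", 5), ("pool", 2), ("conv", 5), ("pool", 2)]
sizes = trace_feature_map_sizes(28, stack)
```

With these assumptions the side length goes from 28 to 24, 12, 8 and finally 4. The small final maps would then be flattened and handed to the fully connected layers and the classification layer.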

Such supervised deep learning methods were the first artificial pattern recognizers to achieve human-competitive performance on certain tasks. [50]

ANNs were able to guarantee shift invariance to deal with small and large natural objects in large cluttered scenes, only when invariance extended beyond shift, to all ANN-learned concepts, such as location, type (object class label), scale, lighting and others. This was realized in Developmental Networks (DNs) [51] whose embodiments are Where-What Networks, WWN-1 (2008) [52] through WWN-7 (2013). [53]

Models